If your brain hurts from thinking too much

It might be time to try something different

The latest AI jump scare is Mythos. Mythos is Greek, and it’s where the word mythology comes from.

AKA “the whole story.”

Mythos is the name of Anthropic’s latest AI model. Unlike their prior models released with fanfare and hyperbole, they’re not releasing Mythos (at least not yet).

They claim it’s too dangerous. [1]

From Anthropic’s national security team:

During our testing, we found that Mythos Preview is capable of identifying and then exploiting zero-day vulnerabilities in every major operating system and every major web browser when directed by a user to do so. The vulnerabilities it finds are often subtle or difficult to detect. Many of them are ten or twenty years old, with the oldest we have found so far being a now-patched 27-year-old bug in OpenBSD—an operating system known primarily for its security.

Why oh why would a company build a thing that could set back cybersecurity 30 years, take down the internet, and force a rewrite of every piece of software?

Put a pin in that for a minute and let’s talk about OpenBSD.

The B in OpenBSD stands for Berkeley. It’s one of the early Unix [2] operating systems, and the very same one I learned to program on when I was a wee sophomore at Cal.

I spent a lot of time down in the basement of Evans Hall.

The computer lab had no windows, lit instead by a matrix of fluorescent tubes, their harsh white light bouncing off row after row of glowing black-and-white CRT terminals. The floor was linoleum and slightly sticky. The lab was powered by all-nighters fueled with caffeine, set to a Motown soundtrack courtesy of a crowdsourced boombox my professor bought and chained to one of the desks. [3]

The room smelled of sweat and — as the semester progressed — a tinge of desperation.

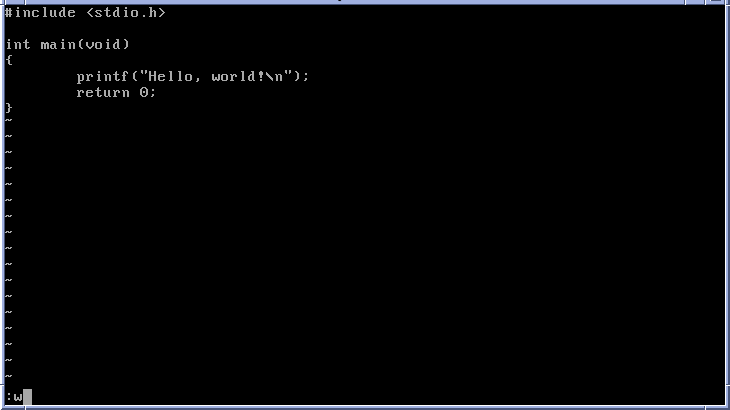

Our programming stack was housed next door in a room full of mini-computers running OpenBSD Unix. [4] We edited our code in this super sexy editor: [5]

After a few hours down in that basement you could feel the hummmm of computing within you, around you, through you. You were in the belly of the beast.

Today, in my high school class, the stack is decidedly more abstract. The kids write their programs in an online educational program called Codio. Their code runs in a virtual sandbox on a nameless server up in the cloud in a datacenter far-far-away. The classroom has windows too.

The fundamentals of programming are not different than those I learned forty years ago. But I have to work hard so the programs they write seem even a little bit relevant to what they see on their phones, their laptops, even the school’s website.

I think they have similar real world disconnects across most of their classes. It must be tough — they certainly aren’t of an age where they are just seeking knowledge.

It’s no wonder they’re easily distracted.

A lot of my kids pick up a Rubik’s cube the minute their hands come off the keyboard. I’ve learned they’re still listening to me. Better than they would without the cube. If you look into the why of this, you’ll find the word neurodivergent.

The term “neurodivergent” describes people whose brain differences affect how their brain works. That means they have different strengths and challenges from people whose brains don’t have those differences. The possible differences include medical disorders, learning disabilities and other conditions. The possible strengths include better memory, being able to mentally picture three-dimensional (3D) objects easily, the ability to solve complex mathematical calculations in their head, and many more.

— Cleveland Clinic (emphasis mine)

I probably fall under this category too, but who knows? Brains are weird.

They’re also amazing, so we started building machines to recognize patterns and create things, mimicking how we think. The first AI approaches were grounded in traditional programming. Now called symbolic AI, they use logic and rules along with purpose-built knowledge structures to make computers reason step-by-step.
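To make "logic and rules" a little more concrete, here is a toy sketch of one classic symbolic technique, forward chaining: start with some facts, apply IF-THEN rules, and repeat until nothing new can be concluded. The facts and rules are invented for illustration and don't come from any real system.

```python
# Toy forward-chaining rule engine. The facts and rules are made up for illustration.

RULES = [
    # (facts that must all be known, fact we may then conclude)
    ({"has_feathers", "lays_eggs"}, "is_bird"),
    ({"is_bird", "cannot_fly", "swims"}, "is_penguin"),
]

def infer(facts, rules=RULES):
    """Return every fact derivable from the starting facts."""
    known = set(facts)
    changed = True
    while changed:  # keep sweeping the rules until a full pass adds nothing
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known

print(sorted(infer({"has_feathers", "lays_eggs", "cannot_fly", "swims"})))
```

Given the same facts, this reaches the same conclusions every time, and you can trace exactly which rule fired at each step.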

Today’s AI is markedly different and it doesn’t always work.

If you’ve talked to an AI chatbot you know the responses can be wildly variable. It’s part of the novelty and the fun. It’s a prediction machine built on statistics. Two runs with the same exact input can have very different outputs. Hallucinations and inaccuracies prevail.
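A rough way to picture the "prediction machine" part: the model assigns a probability to each possible next word, then rolls weighted dice. The sketch below uses Python's standard `random.choices`; the sentence and the probabilities are made up, and a real model works over a vocabulary of many thousands of tokens rather than four words.

```python
import random

# Made-up probabilities for the word that follows "The sky is"
NEXT_WORD_PROBS = {"blue": 0.6, "cloudy": 0.25, "falling": 0.1, "lava": 0.05}

def pick_next_word(probs, rng):
    """Sample one word, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

def most_likely_word(probs):
    """The deterministic alternative: always take the top choice."""
    return max(probs, key=probs.get)

rng = random.Random()  # unseeded on purpose, so each run can differ
for _ in range(5):
    print("The sky is", pick_next_word(NEXT_WORD_PROBS, rng))
print("Always:", most_likely_word(NEXT_WORD_PROBS))
```

Run it twice and the five sampled lines will likely differ, while the last line never changes. Every so often the dice land on "lava."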

JFran uses ChatGPT for lesson plans. It’s good at cranking out, say, “Ten fill-in-the-blank adjective sentences for a third grade bilingual class in a nature unit.”

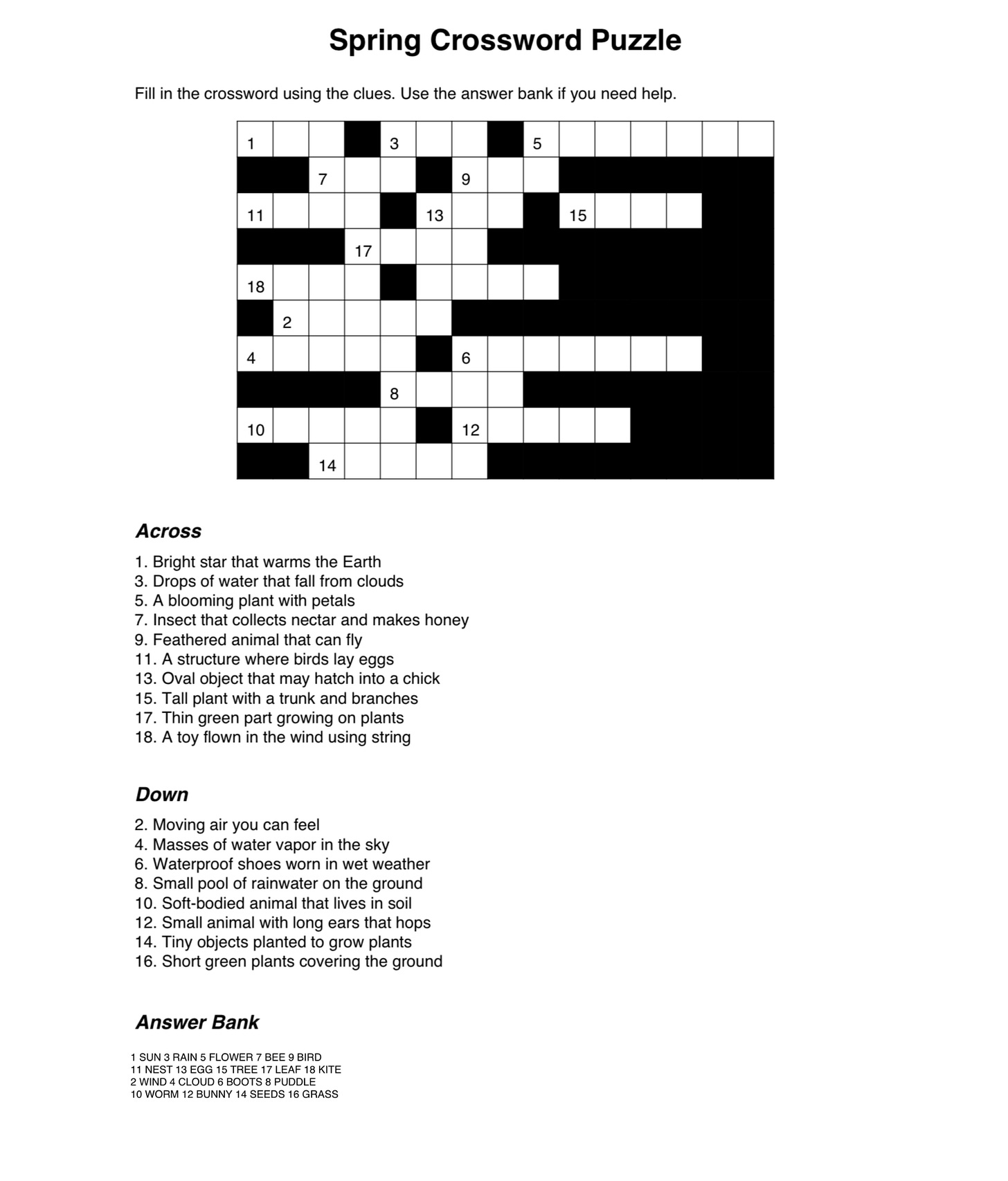

But then she tried to get it to do a simple Spring Crossword puzzle:

If you ignore the paint-it-black on the right, it does resemble a crossword puzzle. But then you realize the words don’t fit and the numbers don’t match and it’s just all a terrible jumble that makes my head hurt.

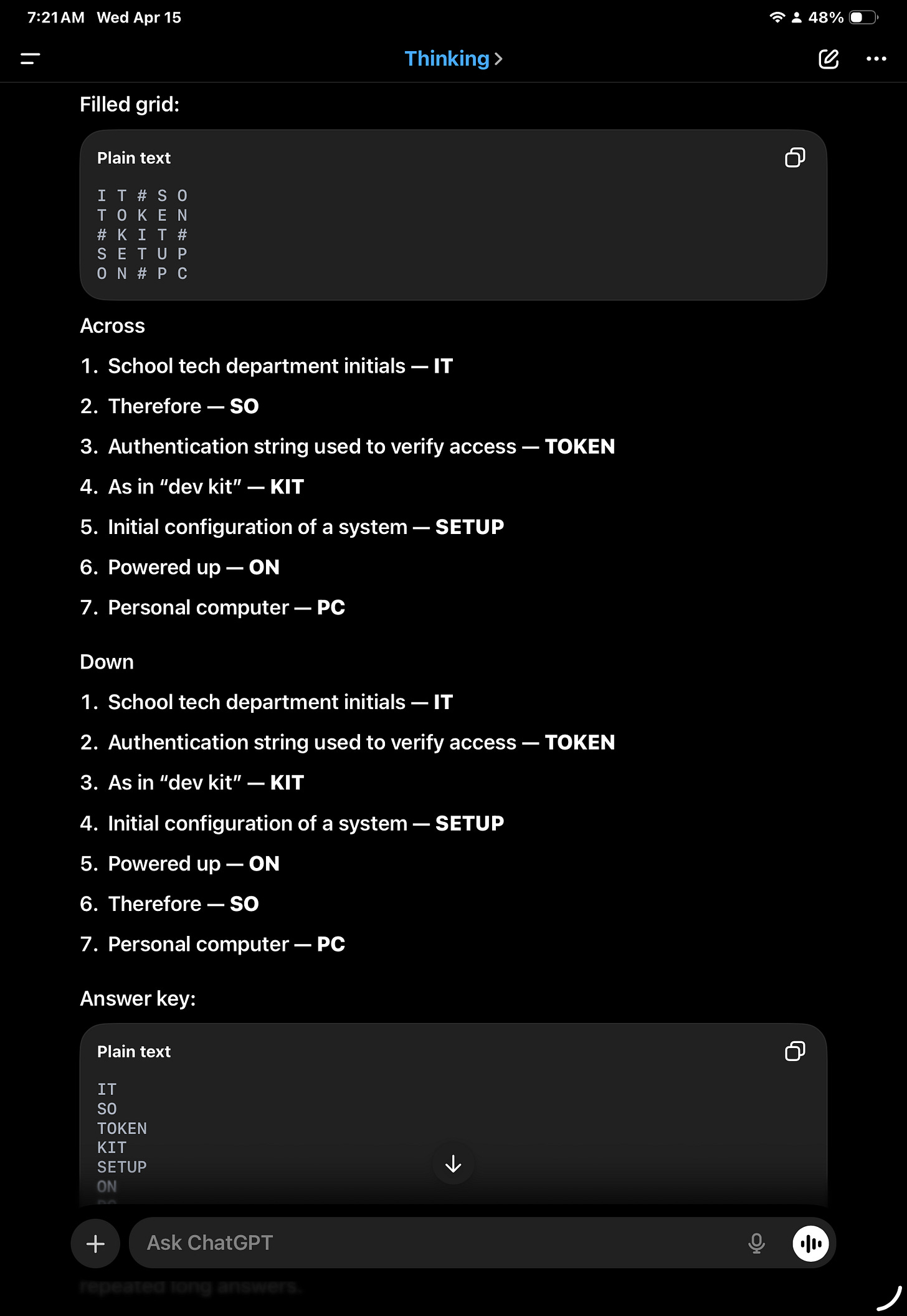

So I tried. This time with a paid subscription and the latest thinking model. I asked for a simple 5 x 5 using computer networking terms. After 14 minutes and 17 seconds of “thinking,” I got this:

Before I started I asked ChatGPT if it could pull off a crossword puzzle:

Yes!

I can make:

quick mini crosswords

standard themed crosswords

easy or hard classroom versions

fill-in style word grids

printable clue lists with answer keys

I can also tailor them to a topic, like AP CSP, astronomy, motorcycles, WordPress, or your own writing. If you want, give me a theme and rough size like 5x5, 9x9, or 15x15.

I questioned its confidence:

Are you sure? I tried before and you were repeatably incorrect in length of words, letters shared by words, the grids connecting, etc. It’s ok to say if you can’t do it, that’s fine.

It got shy:

Yes—your criticism is fair.

I can make crossword puzzles, but I am not reliably perfect at constructing them by hand in one shot, especially for larger true crosswords where every crossing, word length, symmetry, and connectivity must all be exact.

This is the madness of Generative AI Large Language Models.

The AI labs introduced thinking to manage this behavior. That’s where the model interjects rules and stepwise processing to evaluate results before proceeding. As you can see above, that doesn’t always work.

Now we have skills and projects, where you can memorialize the steps after a successful session or task for repetition and keep a running record of resources. This works well for defined tasks without a lot of variance. I use a version of this in my class with a model that’s constrained to operate on a set of curated sources. In my case, that’s all the official AP curriculum.

While this is getting better, it’s still not great. There’s a debate between two opposing AI camps over the future of AI: neural networks (statistical, predictive models) versus traditional symbolic AI (deterministic, rule-based models).

Hybrid models are now showing up, and they might be the best of both worlds. Think of how you or I might balance our intuition with evaluation when making a decision.
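Here is a minimal sketch of that balance, using the crossword as the example: something unpredictable proposes grids (the intuition), and a plain deterministic checker accepts or rejects them (the evaluation). The function names, the tiny word list, and the 3x3 grids are all made up for illustration. The checker only tests that every across and down entry is a real word from the list; it does not check symmetry or connectivity.

```python
def words_in_grid(grid):
    """Collect every across and down run of 2+ letters; '#' is a black square."""
    rows = list(grid)
    cols = ["".join(col) for col in zip(*grid)]
    found = []
    for line in rows + cols:
        found.extend(run for run in line.split("#") if len(run) >= 2)
    return found

def check_grid(grid, allowed_words):
    """Return a list of problems; an empty list means the grid passes."""
    if len({len(row) for row in grid}) != 1:
        return ["rows have different lengths"]
    return [
        f"'{word}' is not in the word list"
        for word in words_in_grid(grid)
        if word not in allowed_words
    ]

def first_valid(candidates, allowed_words):
    """Accept the first proposed grid that passes every rule, else None."""
    for grid in candidates:
        if not check_grid(grid, allowed_words):
            return grid
    return None

ALLOWED = {"LAN", "NAT", "DNS"}
bad = ["LAN", "D#A", "NAT"]   # across entries look fine, first column spells "LDN"
good = ["LAN", "A#A", "NAT"]  # every across and down entry is in the list

print(check_grid(bad, ALLOWED))
print(first_valid([bad, good], ALLOWED))
```

In a real hybrid setup the candidates would come from a model rather than a hard-coded list, but the checker would not care where they came from. It applies the same rules every time.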

Recently Anthropic, the same company that is scaring the pants off us with Mythos, accidentally revealed a bunch of their source code on the internet (hehe). It was Claude Code, a very popular AI coding tool.

Analysis of the leak pointed to a library with large and complex decision trees based on traditional IF-THEN statements. So it appears Claude Code is a hybrid architecture combining new and old AI techniques.

While I only have a passing understanding of all this stuff, one thing I know is the best architecture eventually wins. The example of this I’m teaching my kids right now is the Internet. Out of tons of networks, the set of protocols that define the Internet won. That took about twenty years.

Maybe we regroup from developments like Mythos and realize humanity doesn’t need the risk of powerful computing systems that are a black box to us. We need a deterministic layer to make sure certain rules are always followed.

Like don’t break the Internet.

[1] There’s a lot of irony here (and possibly intentional behavior) after Anthropic and the Pentagon got into a big fight resulting in the DoD claiming they were a supply chain risk.

[2] Unix came from a beast of an operating system called Multics. The naming joke back then was that Unix = castrated Multics.

[3] That would be Professor Doug Cooper, who made me love computers. He passed around his hat the first week of lecture, collecting enough money to buy said boombox. Professor Cooper was also the author of our textbook, Oh! Pascal.

[4] OpenBSD is now very popular with networking vendors, the ones that make the routers and firewalls and other security gear that plumb the Internet. Exactly the stuff you don’t want breaking due to rogue AI.

[5] Colon commands at the bottom of the screen were for stuff like saving. :w was write, :wq was write and quit. Then keys like h j k l were used for left, down, up, right to edit your code on the screen. No mouse.

I can't tell you how many times I had to correct my brain from seeing "OpenBDSM."

These kids you're teaching are doing incredible things.

The thing that gets me about Mythos is the casual mention of a 27-year-old OpenBSD (not to be confused with OpenBDSM) bug, as if that's just a regular Friday. Someone at Anthropic found a vulnerability older than most of their engineers, and the appropriate response was apparently to write a press release instead of patching it and never speaking of it again. The fact that they're telling us about it means either they're being admirably transparent or they want us scared enough to pay attention to AI safety legislation. Oh wait... what AI safety legislation???

Happy Friday Andrew.